Anticipatory Design: Understanding User Needs

As a User Experience (UX) researcher, I’ve been perplexed at how far most software is from what users need once you start testing it with real people, even designs that seem simple and follow best practice. Teams talk, collaborate and pow-wow about great experiences, but when things are actually put in front of a person, it’s a totally different story, and the planned experience begins to unravel as unexpected use cases come to light.

By Sarah Auvil / 6 Apr 2017 / Topics: Customer experience

Certainly, when I meet face to face or remotely with participants, they're all capable humans who have navigated complex challenges in life and have rich experiences and creative thoughts. The human brain is amazing, so why is there such a gap in software? How can an individual who provides for children and has a mortgage miss that the button’s clearly disabled? I’ve observed even very technically savvy people make errors that seem obvious.

The gap lies between what humans are good at and how we communicate, on one side, and how machines actually work and respond to us, on the other.

Humans navigate the world using touch, facial expressions, sight, sound, gestures, smell and taste. Even today, we mainly get information through talking with other people. Many people don’t scour interfaces for clues when they need help. They seek out a family member or co-worker and ask.

How do people actually communicate?

That Homo sapiens possess superior communication and information-processing skills underlies our entire society and existence. Reading the information in this very blog post is evidence of advanced human communication.

Humans are social learners. We share information by talking, as well as through many other cues. Body language, hand gestures, eye contact, facial expressions, volume and tone in our voices can send various messages. We also learn by trying things, watching others and seeing the results.

Written language isn't as natural to us as speaking. It takes a lot of intentional effort (years) to learn to read and write, whereas we learn to speak our local language(s) without any additional formal education.

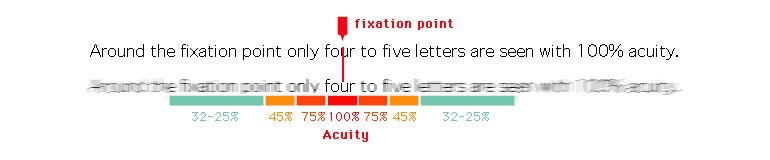

Humans also read using only a very small part of their field of vision: the part that falls on the fovea, a small region at the center of the retina. Outside of that small magnifying glass of focus, letters look very blurry to us, which creates problems when humans use interfaces that have a lot going on or require noticing items that are far apart.

What about accomplishing tasks?

When testing a design, UX researchers are often most interested in task analysis: Can the user confidently get from A to B? Tasks have two parts: 1) motivation — does the user actually want to do it? and 2) friction — is it hard to do? Usability tests usually spend very little time assessing what people actually want to do on an interface on their own since task scenarios are written and directed to users.

It’s tempting to put a big check mark on the project if something is technically usable, but human motivation often doesn't fit into a series of neat check boxes. Sometimes people are unpredictable, have misconceptions about the software or simply lack interest in using it. For these reasons, I recommend starting with contextual inquiry (watching people in their natural environment) and analytics to better understand real-world motivation, then using usability testing to understand friction.

The challenge from a humans-doing-things perspective is not only whether a task is easy, but that many people don’t do things in logical, sequential paths. Software should be designed with enough flexibility to handle the user clicking, tapping or doing something the project team did not expect.

Applying anticipatory design to meet user needs

Software most commonly uses pass/fail conditionals and loops ("if," "for" and "while" statements). The interface presents info to the user, but technology can’t gauge the user’s reaction, confusion or skill level in regard to what's presented. It can shoot them a few errors afterward, at best.

The challenge in building software is that the computer is not smart enough to know when the user is going off the rails unless the team has planned for it. That’s where anticipatory design comes in: a process where you meet user needs by reducing the number of steps involved and providing what the user needs before the need is communicated.

Isn’t it interesting that most companies still have customer service representatives to help people use their website or app? And that the representative will talk through each screen with the customer, and even demonstrate how to do it through screen sharing?

It speaks volumes that reading a large screen of information isn't how people naturally make decisions, but talking to someone back and forth and watching them do it are very familiar, even to people who aren't technical at all. Great product teams and UX practitioners watch real people and engage customer service reps when available rather than making assumptions about how people will use something.

In the future, when someone uses technology, artificial intelligence could seek to understand the user’s questions and familiarity and even customize a dynamic user experience for them — and use more of these human communication-based cues, such as live communication.

Understanding each user's unique challenges

In order to create dynamic experiences, you have to understand where one user may excel and another might get stuck. Most products have to accommodate a variety of technical levels, but few do this well. During persona development, see if you can add some contextual shadowing sessions to see your users in the scenarios you’re designing for. Then:

- Create a workflow for your power user to quickly make his or her selections.

- Create a workflow for a user who doesn’t understand the subject matter well and can’t input the information without assistance or doesn’t understand why/if he or she would need to enter it.
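One way the two workflows above might be wired together is to route each person based on simple usage signals, while always letting them override the choice. The sketch below is only an illustration: the `UsageSignals` fields, the threshold of five completed tasks and the workflow names are assumptions made for the example, not findings from research. Real values should come from your own shadowing sessions and analytics.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UsageSignals:
    """Hypothetical per-user signals a product might already collect."""
    completed_tasks: int               # tasks finished without abandoning
    help_requests: int                 # times the user opened help or contacted support
    input_errors: int                  # validation errors the user ran into
    preference: Optional[str] = None   # explicit choice: "express" or "guided"


def choose_workflow(signals: UsageSignals) -> str:
    """Pick the quick 'express' flow or the assisted 'guided' flow."""
    # An explicit choice by the user always wins over any inference.
    if signals.preference in ("express", "guided"):
        return signals.preference

    # Assumed rule of thumb: experienced and not visibly struggling.
    struggling = signals.help_requests + signals.input_errors
    if signals.completed_tasks >= 5 and struggling == 0:
        return "express"

    # When in doubt, default to the flow that explains each step.
    return "guided"
```

Defaulting to the guided flow is a deliberate choice here: a power user loses a few seconds in the wrong flow, while a struggling user placed in the express flow may not finish at all.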

Where can business and design do the work?

Once your use cases are thought through and documented, think about shortcuts and how information could be reduced to create a more dynamic experience:

- If you provide one piece of information, consider what other information could be drawn from it and prefilled.

- If a use case doesn’t have an option for item X, the information related to item X should not be presented in that scenario.

- Users should only make decisions when there’s an impact; don’t make people think or do duplicate data entry (when they shouldn’t have to).
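The three shortcuts above can be sketched as a small form model: derive what you can from one answer, hide fields that don't apply, and ask only for what is left. Everything named here is hypothetical and chosen for illustration, including the field names, the `POSTAL_CODE_LOOKUP` sample table (a real product would likely call an address service instead) and the relevance rules.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Illustrative sample data only; stands in for a real postal-code service.
POSTAL_CODE_LOOKUP: Dict[str, Dict[str, str]] = {
    "10001": {"city": "New York", "state": "NY"},
}


@dataclass
class FormField:
    name: str
    # Decides whether the field applies, given what is known so far.
    relevant: Callable[[dict], bool] = lambda answers: True


FIELDS: List[FormField] = [
    FormField("product_type"),
    FormField("postal_code"),
    FormField("city"),
    FormField("state"),
    # Shipping speed has no meaning for a digital product, so hide it.
    FormField("shipping_speed",
              relevant=lambda a: a.get("product_type") == "physical"),
]


def prefill(answers: dict) -> dict:
    """Return a copy of answers plus anything derivable from the postal code."""
    filled = dict(answers)
    derived = POSTAL_CODE_LOOKUP.get(answers.get("postal_code", ""), {})
    for key, value in derived.items():
        filled.setdefault(key, value)  # never overwrite what the user typed
    return filled


def fields_to_ask(answers: dict) -> List[str]:
    """Names of the fields the user still has to fill in, in form order."""
    known = prefill(answers)
    return [f.name for f in FIELDS
            if f.name not in known and f.relevant(known)]
```

With this sketch, a person buying a physical product who enters the postal code `10001` is asked only for a shipping speed, since city and state are filled in for them. A person buying a digital product is not asked about shipping at all.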

True equal access

You can’t talk about software for humans without accessibility.

Current technology mainly relies on human vision with occasional audio cues, but most software and web experiences don’t include audio at all. Touch and gestures are a bit of a misnomer in our biological context because many touch devices’ apps still rely on visuals (it’s a flat, non-tactile piece of glass) and gesture interactions are learned for each device, not a natural part of human society.

Almost all interface content is designed primarily for people who can see and hear and who have no learning impairment. Unfortunately, in many circles, complying with the Americans With Disabilities Act (ADA) on the business side is more about avoiding lawsuits than truly creating equal access. I hope in the future, interpretive technology can be used to unlock full communication and access and to break down barriers.

Some early wearable prototypes have been made to attempt to translate American Sign Language (ASL), but since the language uses facial expressions, body language and even depth of sign to convey meaning, sign to speech is tricky.

There are apps now that can translate languages, but these early forms have been a pretty terrible experience. In the future, maybe they can better pick up on context, culture and idioms and work in real time. Perhaps artificial intelligence and better design could even fill in more gaps in face-to-face human communication.

Technologists, be mindful.

The more I research and have conversations about this topic, the more it feels like holding up a mirror. Artificial intelligence and futuristic software will carry over their designer’s assumptions and ideas — both good and bad.

As facilitators of human-computer interactions, we can never forget the human users of software. The intent of technology should be to increase quality of life. When it’s used to leave people behind or replace them, or made without accessibility, the consequences can be disastrous.

Technologists, continually self-educate, think like anthropologists and create accessible apps.

Monthly perspectives from global tech leaders.
