Intro to Docker Swarm Mode and Azure: Part 2

By Insight Editor / 28 Nov 2018, Updated on 16 May 2019 / Topics: Application development, Microsoft Azure

In part 1, I introduced Docker swarm mode, explained how to create a local swarm cluster and showed it in action. This should have given you enough to get started, but typically when you want to get more serious about running containers in a development or production environment, you can’t have things running locally. That’s where Azure comes into play.

The two main options for deploying containers in Azure are Azure Container Service (ACS) and Docker for Azure. Both make it easier to create, configure and manage virtual machines (VMs) that are preconfigured to run containers.

If you’re interested in using something other than Docker for container orchestration, ACS also lets you use Marathon with the Distributed Cloud Operating System (DC/OS), or Kubernetes. For this article, I’ve chosen Docker for Azure because ACS doesn’t support Docker swarm mode (as of this writing) and because Docker for Azure is a bit more intuitive to use.

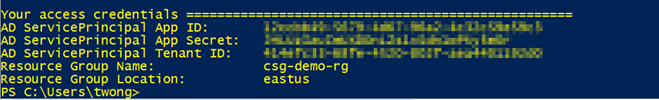

> docker pull docker4x/create-sp-azure:latest

> docker run -ti docker4x/create-sp-azure [sp-name] [rg-name] [rg-region]

> cat /etc/resolv.conf

> docker node ls

> ssh <node-hostname><internal-domain-name>
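
The last three commands can be tied together with a small helper. This is only a sketch: it assumes the internal domain name is the first entry on the `search` line of `/etc/resolv.conf`, and that the node hostname and the domain are joined with a dot like an ordinary fully qualified name. The function names `internal_domain` and `node_fqdn` are hypothetical, and `NODE_HOSTNAME` stands in for a value from the HOSTNAME column of `docker node ls`.

```shell
#!/bin/sh
# Hypothetical helper: print the first domain on the "search" line of a
# resolv.conf-style file (defaults to /etc/resolv.conf).
internal_domain() {
  awk '$1 == "search" { print $2; exit }' "${1:-/etc/resolv.conf}"
}

# Hypothetical helper: join a node hostname and the internal domain.
# Assumption: the two parts are separated by a dot, as in a normal FQDN.
node_fqdn() {
  printf '%s.%s\n' "$1" "$2"
}

# Example usage from a manager node (NODE_HOSTNAME is a placeholder):
#   ssh "$(node_fqdn "$NODE_HOSTNAME" "$(internal_domain)")"
```

If your `resolv.conf` lists the domain differently, adjust the `awk` pattern rather than relying on this guess.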

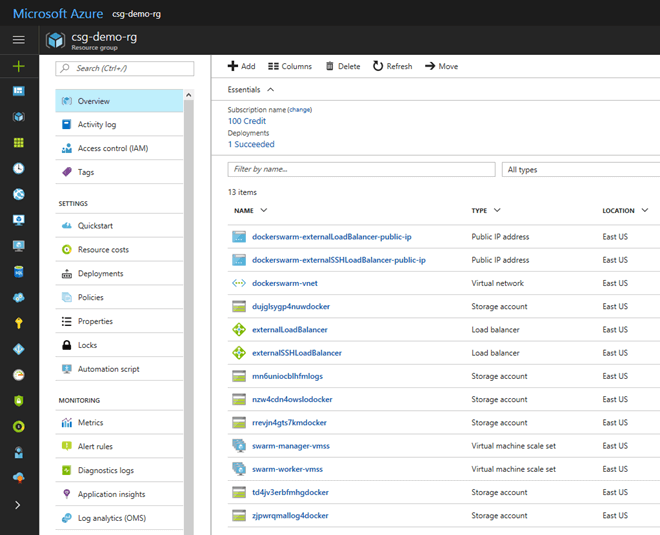

So now you have a Docker swarm cluster up and running in Azure. From here, feel free to deploy some services and test things out. Don’t forget to delete everything when you’re done. Otherwise, you’ll keep getting charged. The easiest way to do that is to delete the resource group.
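
A test deployment and the cleanup might look like the sketch below. It assumes the `docker` commands are run on a swarm manager node and that the Azure CLI (`az`) is installed and logged in wherever you run the cleanup. The names `web`, `nginx` and `swarm-rg` are placeholders, not values from this walkthrough. Because deleting a resource group can’t be undone, the `run` helper (also hypothetical) only prints each command unless `RUN=1` is set.

```shell
#!/bin/sh
# Placeholder names; none of these come from the walkthrough above.
SERVICE_NAME="${SERVICE_NAME:-web}"
RG_NAME="${RG_NAME:-swarm-rg}"

# run: print a command, and execute it only when RUN=1 is set.
run() {
  echo "+ $*"
  if [ "${RUN:-0}" = "1" ]; then "$@"; fi
}

# On a swarm manager: start a small replicated test service,
# list its tasks, then remove it again.
deploy_test_service() {
  run docker service create --name "$SERVICE_NAME" --replicas 3 --publish 80:80 nginx
  run docker service ps "$SERVICE_NAME"
  run docker service rm "$SERVICE_NAME"
}

# From a machine with the Azure CLI: delete the whole resource group.
# --yes skips the confirmation prompt; --no-wait returns immediately.
delete_resource_group() {
  run az group delete --name "$RG_NAME" --yes --no-wait
}
```

Source the file and call `deploy_test_service` or `delete_resource_group` to preview the commands, then repeat with `RUN=1` exported once the output looks right.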